Machine Learning: Bridge from Education to Career, a talk at AWS Machine Learning University, March 3, 2022. The slides can be accessed here.

Tag: AWS

Interview at KZSB “Money Talk” on AI

KZSB radio interview from Monday May 15, 2023, on AI/ML, and especially ChatGPT. Link to Apple Podcast.

KVTA 1590 interview on Cloud and AI

May 8, 2023, with Tom Spence, on KVTA 1590

The podcast of the entire KVTA Morning Show with Spence can be found here and my presentation starts 3hrs and 38min into the show (3:38).

Keynote speaker at KES2022

I am excited to join a list of distinguished keynote speakers at KES2022!

My abstract: In this talk we are going to examine Data Analytics in the Cloud. In particular, we will demonstrate the data flow for Analytics and Machine Learning, starting with sourcing data (structured, semi-structured and unstructured), pipelining it in both batch mode and streaming, storing it in a Data Lake, and finally making it available to Machine Learning and Business Intelligence. We will illustrate this complex engineering process with examples from Amazon Web Services (AWS).

AWS Solutions Architect – Professional certification

Last week I took and passed the AWS Solutions Architect – Professional certification. As is to be expected, this is a challenging exam that assumes detailed familiarity with the AWS cloud. However, it is also a very satisfying exam precisely because it goes in depth.

The difficulty is not conceptual, in that the questions reflect the knowledge a computer scientist ought to have; that is, given enough time each question can be answered with strong familiarity with the AWS cloud domains: compute, databases, storage and, especially, networking. The challenge of the exam is twofold. First, the questions are detail oriented; for example, that Route 53 requires a Type A record for a CloudFront distribution, but a CNAME record for RDS. Second, as there are 75 questions and 3 hours, each question can take on average at most 2 min and 24 sec. It takes me about 1.5 min to read the question and the answers carefully, and so less than a minute is left to make a decision where one answer is perhaps the outlier, but the other 3 answers are all correct, except that one of them is ideal given the phrasing of the question (e.g., optimize cost or performance efficiency). The point is that the exam tests in-depth familiarity with a wide range of services.

How to study for this exam? I am interested, professionally, in the process of exam preparation. I looked briefly at an array of online courses related to this certification: Udemy, TutorialsDojo, O’Reilly, Cantrill and A Cloud Guru. I am impressed by the quality of the materials: videos, slides, tutorials, labs, practice exams. Unfortunately, I was able to set aside very little time to prepare for this exam, and watching long videos was not an option. I preferred to read white papers and AWS console documentation.

One of my favorite books in Computer Science is an old classic: An Introduction to Programming in Emacs Lisp, by Robert J. Chassell. This is such an enjoyable text; yet, the author admits that one of the reviewers of that book wrote the following:

I prefer to learn from reference manuals. I “dive into” each paragraph, and “come up for air” between paragraphs.

I find myself in the same place now, where I have to regularly absorb vast amounts of information, and I prefer succinct presentations without pedagogical aids; just the essential material to be ingested quickly without distractions. That is how I prepared for this exam, using the AWS white papers and other technical documentation.

CSUCI Partnership with AWS Academy

Cloudifying the Curriculum with AWS

My paper, Cloudifying the Curriculum with AWS, was presented at Frontiers in Education (FiE) 2021, in Lincoln, Nebraska, October 14, 2021.

Abstract: This is an Innovative Practice Full Paper. The Cloud has become a principal paradigm of computing in the last ten years, and Computer Science curricula must be updated to reflect that reality. This paper examines simple ways to accomplish curriculum cloudification using Amazon Web Services (AWS), for Computer Science and other disciplines such as Business, Communication and Mathematics.

This paper is an expanded version of a technical report (see here). In particular, it contains material related to teaching Machine Learning (see here).

At the same conference, FiE 2021, I was also part of a panel with Joshua C. Nwokeji, Myra Roldan, and Terry Holmes, on A Hands-on Approach to Cloudifying Curriculum in Computing and Engineering Education.

Abstract: The term cloud computing is used to describe a pool of configurable virtualized computing resources (databases, software, storage, network, servers, etc.) delivered as a service via the Internet. The demand for cloud computing professionals continues to increase, yet academic institutions make little effort to integrate cloud computing into the computing curriculum. Lack of cloud computing resources has been identified as a major hindrance to teaching and learning cloud computing in higher education. This workshop aims to explore resources that could help computing educators teach cloud computing to students. Through hands-on activities, participants will learn about the various resources, tools, and technologies that are freely available for teaching and learning cloud computing.

Enrollment Predictions with Machine Learning

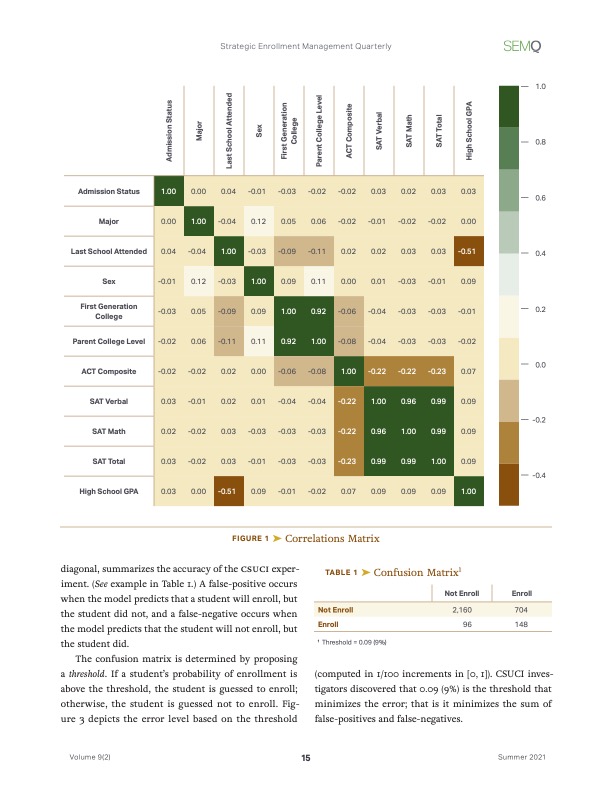

Happy to announce that our joint paper, Enrollment Predictions with Machine Learning, co-authored with Hung Dang, Ginger Reyes Reilly and Katharine Soltys, has appeared in Volume 9, number 2, of the Strategic Enrollment Management Quarterly (SEMQ).

In this paper a Machine Learning framework for predicting enrollment is proposed. The framework consists of Amazon Web Services SageMaker together with standard Python tools for Data Analytics, including Pandas, NumPy, Matplotlib and Scikit-Learn. The tools are deployed with Jupyter Notebooks running on AWS SageMaker. Based on three years of enrollment history, a model is built to compute (individually or in batch mode) probabilities of enrollment for given applicants. These probabilities can then be used during the admission period to target undecided students. The audience for this paper is both SEM practitioners and technical practitioners in the area of data analytics. Through reading this paper, enrollment management professionals will be able to understand what goes into the preparation of a Machine Learning model to help with predicting admission rates. Technical experts, on the other hand, will gain a blueprint for what is required from them.

This paper has been made possible in part by the AWS Pilot Program in Machine Learning where California State University Channel Islands was one of the participating institutions.

AWS Machine Learning certification

The AWS Machine Learning (ML) certification is a demanding exam that requires the mastery of AWS IT infrastructure, e.g., Kinesis; Statistics, e.g., Principal Component Analysis (PCA); an expert level familiarity with AWS SageMaker, a one-stop shop for ML in the AWS console; and modeling with a large variety of algorithms, e.g., XGBoost, K-NN, Linear Learner, etc. In short, it requires background in Machine Learning, especially in model tuning, in Statistics, and in the AWS cloud. This post is aimed at those who wish to both learn the practice of ML in the AWS Cloud, and prepare for the AWS Specialty ML certification.

I suspect that there are many Computer Scientists, IT professionals, Business leaders, and others, who are drawn to the field of ML by the ubiquity of its applications, and by the fact that the cloud opened up the practice of ML to anyone who is interested. I was first introduced to ML by Professor Jan Mycielski, at the University of Colorado at Boulder, who gave me his copy of Perceptrons by Marvin Minsky and Seymour Papert, written in 1969 with a second printing in 1972. The subtitle of Perceptrons is An Introduction to Computational Geometry, and the emphasis of this visionary book (pun intended), which laid the foundations of ML, is on what we would call today object recognition. Indeed, the book’s approach is through the problem of computer vision. Many of the mathematical ideas proposed in the book lay dormant for years, as the hardware needed to run the required computation did not exist at first, and later was the domain of a few scientists with access to mainframes. But today, thanks to the economies of scale of computing allowed by the cloud, anyone can set up an ML training job for a few hundred dollars. Of course, this led to an explosion of the field, and its great applicability in medicine, finance, weather predictions, recommender systems, etc., attracted both enthusiasts and professionals. In my academic and consulting work I have been asked to contribute to problem solving with ML, and the AWS ML Pilot which graciously invited our campus to participate, as well as a recent paper I co-authored on applications of ML to enrollment, spurred me to learn more about ML. A great place to start is the AWS ML certification, as it requires both a theoretical understanding of the field (attractive to academics) and an understanding of the ML pipeline based on AWS tools (attractive to practitioners).

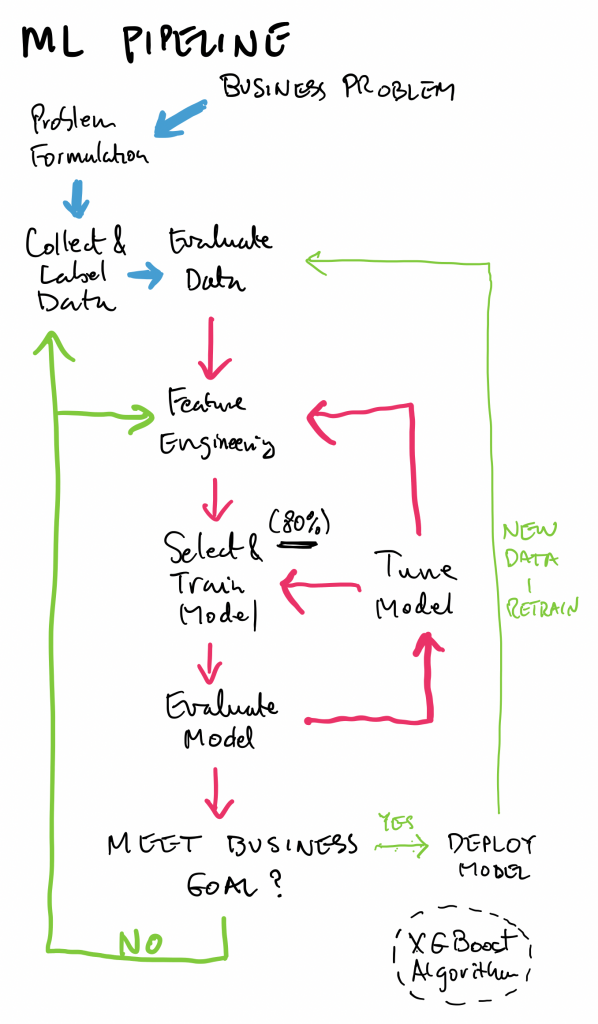

ML Pipeline

A good way to study for the ML certification is to follow the ML pipeline, whose four main stages coincide with the four domains of the exam guide (Specialty MLS-C01 Exam Guide v1.2):

- Data Engineering (20%)

- Exploratory Data Analysis (24%)

- Modeling (36%)

- ML Implementation and Operations (20%)

The percentages indicate the fraction of the exam (65 questions total) dedicated to the given domain. As can be seen, Modeling, consisting of stats, algorithms, tuning and evaluation, is the largest portion. This sets apart the ML certification from other AWS certifications, as it includes a fair amount of theoretical material.

Data Engineering

This domain covers how to create data repositories, e.g., a Data Lake, how to ingest data into the repository, and then how to transform the raw data in the repository into data that can be analyzed. This last step is known as a data cleaning operation.

An example of a typical solution is an S3 bucket that hosts the Data Lake, a massive dump collecting data from various sources. This data is then worked on by an Extract-Transform-Load (ETL) application hosted on an Elastic MapReduce (EMR) cluster. The work done here consists of taking the data from a heterogeneous set of sources (JSON files, text files, Relational Data Base dumps, images, etc.), and making the data uniform, conforming to a set of conventions described by a table of items (rows) with attributes (columns). The ETL application is typically something like Apache Spark or Apache Hadoop. The data is then written back into the S3 bucket hosting the Data Lake (or possibly a new S3 bucket). The data is now ready, and commonly living in a Pandas DataFrame, for consumption by the second stage of the pipeline: Exploratory Data Analysis.

If an exam question has qualifiers such as “set up with little effort” or “minimal management,” this may be an indication that Glue, a serverless data solution, might be preferable to setting up an EMR cluster. This blog post describes how to create a Glue based pipeline.

Here is an interesting blog post on how to preprocess input data before making predictions using SageMaker Inference Pipelines and Scikit-learn.

Exploratory data analysis

In this stage, data is sanitized. This can mean, for example, taking a variety of date formats (e.g., January 23, 2021, 01/23/2021, 23-1-21, etc.) and making them uniform; or dealing with missing or incomplete data.
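As a minimal sketch of the date clean-up just described, the following tries a short list of known formats in turn and emits ISO 8601 dates. The format list and the helper name `normalize_date` are illustrative assumptions; they cover only the three example formats mentioned above.

```python
from datetime import datetime

# Formats to try, in order. This list is an assumption: it matches the three
# examples in the text, and real data usually needs more entries.
KNOWN_FORMATS = ["%B %d, %Y", "%m/%d/%Y", "%d-%m-%y"]


def normalize_date(raw):
    """Return the date as an ISO 8601 string (YYYY-MM-DD), or None if unparseable."""
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue  # this format did not match; try the next one
    return None  # leave it to the missing-data step


dates = ["January 23, 2021", "01/23/2021", "23-1-21", "not a date"]
normalized = [normalize_date(d) for d in dates]
```

A string such as 01/02/2021 is ambiguous between month-first and day-first conventions; here the order of the format list decides, so it should reflect what is known about the data source.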

ML takes numeric data only, and so all columns have to contain numbers. This may mean taking ordinal data (such as large, medium, small) or nominal data (such as colors), and representing it with numerical data. A subtle point here is that large, medium, small may be translated into 3,2,1, as the numbers represent the order, but the same should not be done with colors where there is no natural ordering to them. In the case of colors, we may want to use one-hot encoding: replace the column with color names with a set of columns with headings for the different colors, as in this example:

| Color | Red | Blue | Green |
| --- | --- | --- | --- |
| Red | 1 | 0 | 0 |
| Blue | 0 | 1 | 0 |
| Green | 0 | 0 | 1 |
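The two encodings can be sketched in a few lines of plain Python. The function names and the `SIZE_ORDER` mapping are hypothetical, chosen only for illustration; in a notebook one would more likely reach for a library routine such as `pandas.get_dummies` for the one-hot step.

```python
# Ordinal data: the order is meaningful, so map it to integers that preserve it.
SIZE_ORDER = {"small": 1, "medium": 2, "large": 3}


def encode_ordinal(values, order=SIZE_ORDER):
    """Replace each ordinal label by its rank."""
    return [order[v] for v in values]


def one_hot(values):
    """Nominal data: one 0/1 column per category, so no ordering is implied."""
    categories = sorted(set(values))  # column headings, in alphabetical order
    rows = [[1 if v == c else 0 for c in categories] for v in values]
    return categories, rows


sizes = encode_ordinal(["large", "small", "medium"])
categories, rows = one_hot(["Red", "Blue", "Green"])
```

Note that this sketch orders the columns alphabetically (Blue, Green, Red), so the column order differs from the table above while the encoding itself is the same.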

There are two techniques for dealing with missing data:

- Remove rows or columns containing missing data

- Impute missing values, that is, fill them in with the mean of the column, or simply zero, or, a little more advanced, with a value predicted by regression, very commonly linear regression, which assumes that the missing value can be estimated well from a linear combination of the existing entries. An excellent source for learning ML is Dive into Deep Learning; see Section 3.1, Linear Regression.
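Both techniques can be illustrated on a tiny table, with `None` marking a missing entry. This is a sketch under simplifying assumptions (numeric columns, at least one known value per column), and the function names are made up for the example; it shows mean imputation only, not the regression variant.

```python
from statistics import mean


def drop_missing(rows):
    """Technique 1: remove every row that has a missing (None) entry."""
    return [r for r in rows if None not in r]


def impute_mean(rows):
    """Technique 2: replace each missing entry with the mean of its column."""
    columns = list(zip(*rows))
    col_means = [mean(v for v in col if v is not None) for col in columns]
    return [
        [col_means[j] if v is None else v for j, v in enumerate(r)]
        for r in rows
    ]


data = [[1.0, 10.0], [None, 20.0], [3.0, None]]
dropped = drop_missing(data)
imputed = impute_mean(data)
```

Dropping rows is simple but here discards two thirds of the data, which is the usual argument for imputing when missing values are frequent.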

Once the data is sanitized and resides in a table it is possible to perform feature engineering on it. Note here that there are two related terms: attributes and features. Attributes come with the raw data; they are given. Features, on the other hand, are those attributes we select to run the training process. Part of feature engineering is to select appropriate attributes for features.

For example, in detecting fraudulent credit card transactions, it may not be necessary to include the transaction id, which may be generated randomly and carry no information needed to train a model. Thus we drop the transaction id attribute, i.e., it does not become a feature. Another example may be when predicting school enrollments (which students end up coming in the fall based on their applications), it may not be necessary to have both SAT and ACT scores; the two scores may be highly correlated, in which case we only need one of them to train the model. The correlation of two attributes may be found visually with a correlation matrix or a scatter matrix.
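To make the SAT/ACT example concrete, here is a small sketch that computes the Pearson correlation between attributes and flags a pair as redundant when it exceeds a cutoff. The scores are invented for illustration, and the 0.9 threshold is an arbitrary assumption rather than a recommended value.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long numeric lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Made-up values for five applicants (illustrative only)
sat = [1000, 1100, 1200, 1300, 1400]
act = [19, 22, 25, 28, 31]        # exactly linear in sat in this toy data
gpa = [3.9, 2.8, 3.5, 3.0, 3.6]

THRESHOLD = 0.9                   # assumed cutoff for "highly correlated"
r_sat_act = pearson(sat, act)
r_sat_gpa = pearson(sat, gpa)
drop_act = abs(r_sat_act) > THRESHOLD   # keep only one of SAT / ACT
drop_gpa = abs(r_sat_gpa) > THRESHOLD
```

A correlation matrix is simply this coefficient computed for every pair of attributes.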

The process of removing attributes and/or combining them is called dimensionality reduction, and there are two techniques for it:

- Principal Component Analysis (PCA)

- t-Distributed Stochastic Neighbor Embedding (t-SNE)

It may be confusing that PCA and t-SNE are ML algorithms, in fact unsupervised ML algorithms, which are also used to prepare data for ML training. There are differences between PCA and t-SNE, discussed, for example, in this StackExchange post.
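As a rough illustration of what PCA does, the sketch below reduces two strongly correlated attributes to a single component using NumPy's singular value decomposition. This is a textbook formulation and not the SageMaker PCA algorithm; the synthetic data and the function name `pca` are assumptions made for the example.

```python
import numpy as np


def pca(X, n_components):
    """Project the rows of X onto the top principal components."""
    X_centered = X - X.mean(axis=0)
    # Rows of Vt are the principal directions, ordered by decreasing variance.
    U, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    explained = (S ** 2) / np.sum(S ** 2)  # fraction of variance per component
    return X_centered @ Vt[:n_components].T, explained[:n_components]


rng = np.random.default_rng(0)
t = rng.normal(size=200)
# Two strongly correlated attributes plus a little noise
X = np.column_stack([t, 2 * t + 0.05 * rng.normal(size=200)])
Z, ratio = pca(X, n_components=1)
```

Because the two columns are nearly collinear, the single retained component accounts for almost all of the variance, which is the situation in which dropping a dimension loses little information.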

Modeling

As with any advanced field in the sciences, it is good to be familiar with the nomenclature, in particular the difference – in the context of ML – between algorithm, model and framework:

- Algorithm: In general, algorithms are step by step instructions that turn inputs into outputs. Algorithms are a basic object of study of Computer Science, and they have been defined formally (see for example Knuth’s The Art of Computer Programming, Volume 1). In the context of ML, algorithms take as input your data and output a model. For example, in the case of the Linear Regression algorithm, the input is a set of observations, and the output is a linear function with a bias. The SageMaker algorithms, which are the algorithms needed for the exam, can be found here.

- Model: the interpretation of the data obtained for the sake of predictions, computed with an algorithm. The quality of a model depends on the selection of an appropriate algorithm, the fine tuning process, and the testing.

- Framework: a set of software tools, libraries and interfaces used to compute models with algorithms. For example, the textbook Dive into Deep Learning proposes three frameworks: MXNet, PyTorch and TensorFlow; all three are open source, developed by Apache, Facebook and Google, respectively. SageMaker supports all three frameworks, as well as others that can be seen here.
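The distinction between algorithm and model can be seen in a toy version of linear regression with a single feature. In this sketch, which uses the closed-form least squares solution and hypothetical function names, `fit_linear_regression` plays the role of the algorithm and the returned pair `(w, b)` is the model.

```python
def fit_linear_regression(xs, ys):
    """Algorithm: ordinary least squares for one feature. Returns the model (w, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    w = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
         / sum((x - mx) ** 2 for x in xs))
    b = my - w * mx
    return w, b


def predict(model, x):
    """Model: the learned linear function with a bias, applied to a new input."""
    w, b = model
    return w * x + b


observations_x = [1.0, 2.0, 3.0, 4.0]
observations_y = [3.0, 5.0, 7.0, 9.0]   # generated by y = 2x + 1
model = fit_linear_regression(observations_x, observations_y)
prediction = predict(model, 10.0)
```

A framework, in this picture, is the library that would supply `fit_linear_regression` along with the tooling to run it at scale.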

We should also add tensors to the list of useful definitions. There seems to be confusion regarding what a tensor is. The confusion may arise from the generality of the concept; many mathematicians have learned the concept in a book such as Spivak’s Analysis on Manifolds; physicists use tensors in mechanics. For the sake of ML, the most useful way to conceptualize tensors is as a generalization of the sequence: scalar (0-dim tensor), vector (1-dim tensor), matrix (2-dim tensor), and now a “cube matrix”, indexed by (i,j,k), is a 3-dim tensor, etc. Indeed they are called ndarray in MXNet. Tensors facilitate the designation of linear transformations in n-dimensional vector spaces. See here for more.
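The scalar, vector, matrix, cube progression can be checked directly. The sketch below uses NumPy arrays as a stand-in for the MXNet ndarray mentioned above, which is an assumption made only to keep the example dependency-light.

```python
import numpy as np

scalar = np.array(5.0)                 # 0-dim tensor
vector = np.array([1.0, 2.0, 3.0])     # 1-dim tensor
matrix = np.ones((2, 3))               # 2-dim tensor
cube = np.zeros((2, 3, 4))             # 3-dim tensor, indexed by (i, j, k)

# Number of axes of each object
dims = [t.ndim for t in (scalar, vector, matrix, cube)]

# A matrix acts as a linear transformation: here from 3 dimensions to 2
transformed = matrix @ vector
```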

The primary interface to SageMaker is the Jupyter Notebook, a development environment popular with Data Analysts. A Jupyter notebook has a kernel which is the computational engine on which the notebook runs, and both Python and R are natively supported on SageMaker notebooks. When opening a new Jupyter Notebook in SageMaker, the user can select a kernel which supports a given framework.

The first step in the area of Modeling is to cover the different SageMaker algorithms, all listed here. The Table: Mapping use cases to built-in algorithms in the linked document is especially useful, as it lists the algorithms according to use cases. We summarize them here:

- Supervised Learning:

  - Binary or Multi-class classification; e.g., a spam filter

  - Regression; e.g., estimating home values

  - Time Series Forecasting; e.g., predict sales

  The algorithms here are DeepAR for Time Series, and for the first two, classification and regression, they are: Factorization Machines, K-NN, Linear Learner (LL), XGBoost

- Unsupervised Learning:

  - Feature Engineering (PCA); e.g., combine several features into one (component)

  - Anomaly Detection (RCF); e.g., find outliers that make model training more difficult, since the “regular” points without outliers lend themselves to a simpler model

  - Embedding high to low dimension (Object2Vec)

  - Clustering or Grouping (K-Means); for discrete groupings within data

  - Topic Modeling (organize docs into topics not known in advance: LDA, NTM)

- Textual Classification:

  - Text classification into pre-defined categories (BlazingText)

  - Translation, summary or speech-to-text (Seq2Seq)

- Image Processing:

  - Image and multi-label classification

  - Object detection and classification

  - Computer Vision, as in self-driving cars

Hyperparameters are values given to a particular ML algorithm that control how training runs: size of steps, constants, batch sizes, etc. Choosing the right hyperparameters is an art form, and it is acquired by experience, and explained to some extent here. Note that just as we used ML for dimensionality reduction, we can also use ML to tune hyperparameters; this is done with Bayesian search and explained in the link just given. In order to help with the understanding of hyperparameters, I recommend reading Linear Regression Implementation from Scratch, where basic hyperparameters such as learning rate, minibatch size and number of epochs are explained with great examples in the setting of minibatch stochastic gradient descent.
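To see where such hyperparameters enter, here is a small minibatch stochastic gradient descent loop for one-feature linear regression, written in plain Python. It is a sketch in the spirit of the from-scratch chapter and not a copy of it; the default values for `learning_rate`, `batch_size` and `num_epochs` are arbitrary choices that happen to work on this toy data.

```python
import random


def train_sgd(xs, ys, learning_rate=0.05, batch_size=2, num_epochs=500, seed=0):
    """Fit y = w*x + b by minibatch stochastic gradient descent on squared loss."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(num_epochs):              # one epoch = one pass over the data
        rng.shuffle(indices)
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            # Average gradient of 0.5 * (w*x + b - y)^2 over the minibatch
            grad_w = sum((w * xs[i] + b - ys[i]) * xs[i] for i in batch) / len(batch)
            grad_b = sum((w * xs[i] + b - ys[i]) for i in batch) / len(batch)
            w -= learning_rate * grad_w      # step size set by the learning rate
            b -= learning_rate * grad_b
    return w, b


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]   # generated by y = 2x + 1, no noise
w, b = train_sgd(xs, ys)
```

None of the three values is learned from the data; changing them changes how quickly, or whether, the loop approaches the weights w = 2 and b = 1, which is why they are tuned separately from the model parameters.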

A framework has been selected, an algorithm chosen, a model constructed; how do we know the quality of the model? We need to be able to test against some data that was not used in the training, but for which we know the targets. To that end, it is customary to train the model on 80% of the data, and reserve 10% for validation, used while building the model, and 10% for testing, done at the end. Let’s concentrate on the supervised binary classification case.
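The 80/10/10 split can be sketched as follows. The function name and the fixed seed are assumptions for the example; shuffling first matters when the rows arrive in some meaningful order, such as by date.

```python
import random


def train_val_test_split(rows, val_frac=0.1, test_frac=0.1, seed=0):
    """Shuffle, then split into train / validation / test subsets."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_val = int(len(shuffled) * val_frac)
    n_test = int(len(shuffled) * test_frac)
    n_train = len(shuffled) - n_val - n_test   # the remainder is used for training
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test


rows = list(range(100))
train, val, test = train_val_test_split(rows)
```

The three subsets are disjoint and together cover the whole dataset, so no test row has been seen during training.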

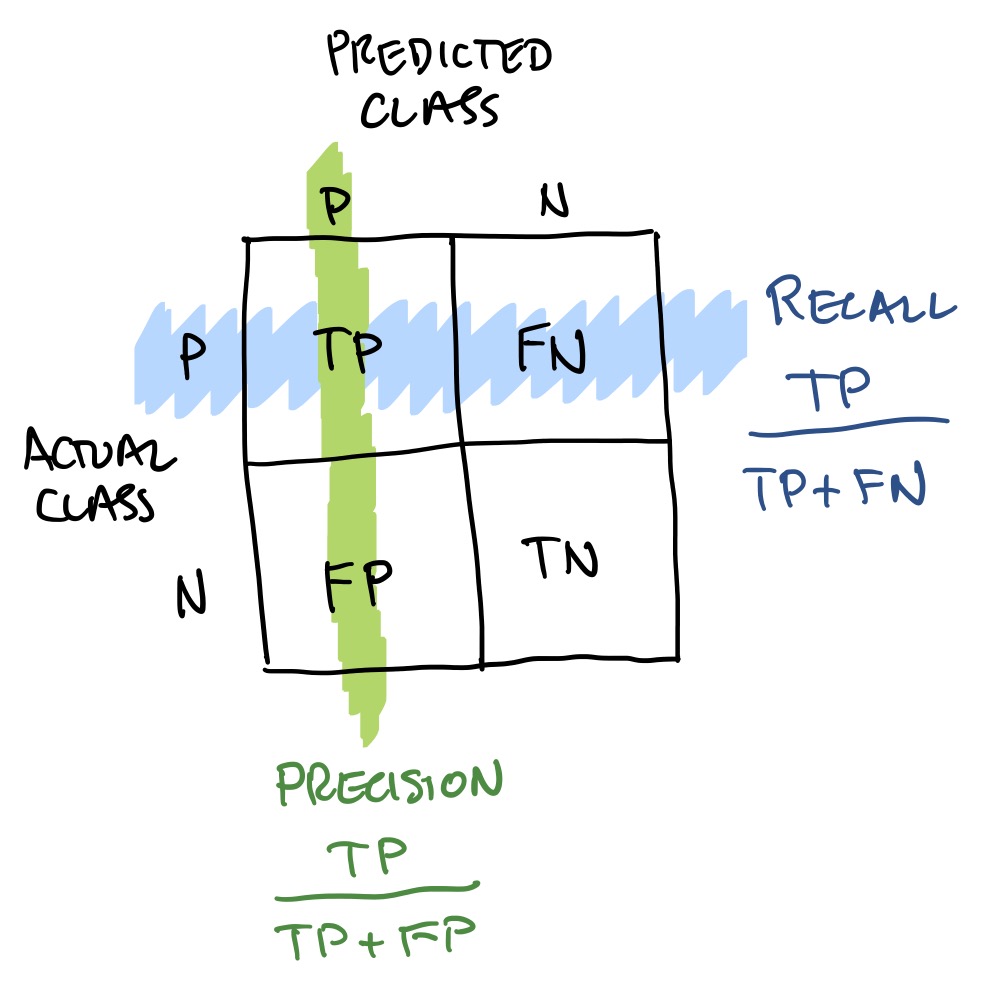

The first step of testing is to build a confusion matrix, which counts how many True Positives (TP), False Negatives (FN), False Positives (FP) and True Negatives (TN) there are. For example, TP are those items which were predicted as positive by the model, and were actually positive; FP are those items which were predicted positive erroneously by the model, since they are in fact negative. There are several metrics associated with a confusion matrix. In the diagram to the left we show Precision, which is TP/(TP+FP), and Recall, which is TP/(TP+FN). Imagine that our model is classifying MRI images according to whether cancer is present or not. It is desirable to identify all images with cancer, even at the cost of having some false positives (the argument being that a dramatic scare is preferable to a tragic neglect of treating a cancer). Thus, in this case high recall can be striven for at the cost of a moderate precision. Note that both recall and precision have a value in [0,1], and a recall close to 1 implies a FN close to zero. There are various other metrics well explained here; Wikipedia has an intro to confusion matrices (note they flipped actual and predicted classes relative to ours).
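The counts and the two metrics can be computed directly from the definitions above. The labels below are invented for illustration (1 meaning cancer present, 0 meaning absent), and the function names are hypothetical.

```python
def confusion_counts(actual, predicted):
    """Count TP, FP, FN, TN for binary labels (1 = positive, 0 = negative)."""
    pairs = list(zip(actual, predicted))
    tp = sum(1 for a, p in pairs if a == 1 and p == 1)
    fp = sum(1 for a, p in pairs if a == 0 and p == 1)
    fn = sum(1 for a, p in pairs if a == 1 and p == 0)
    tn = sum(1 for a, p in pairs if a == 0 and p == 0)
    return tp, fp, fn, tn


def precision(tp, fp):
    """TP / (TP + FP): of the items flagged positive, how many really are."""
    return tp / (tp + fp)


def recall(tp, fn):
    """TP / (TP + FN): of the truly positive items, how many were found."""
    return tp / (tp + fn)


actual    = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
predicted = [1, 1, 1, 0, 1, 1, 0, 0, 0, 0]
tp, fp, fn, tn = confusion_counts(actual, predicted)
p, r = precision(tp, fp), recall(tp, fn)
```

In this toy run the model misses one of four positive cases, so recall is 0.75, while two false alarms bring precision down to 0.6. The sketch does not guard against a zero denominator, which occurs when the model predicts no positives at all.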

Implementation and operations

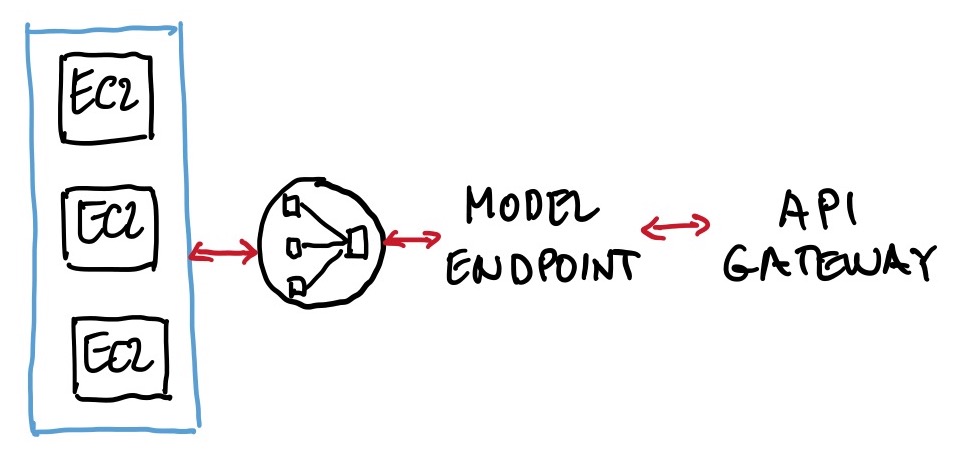

An ML model exists, and now we want to deploy it. In this domain Containers make an appearance, and some familiarity with the concept is necessary (containers are covered in detail in the AWS Developer certification). Another fundamental deployment concept is that of an endpoint. An endpoint is where we attach the model once ready to make inferences; it is a fully managed service that allows real-time inferences via a REST API (see here and here).

The components of a typical deployment, going right to left in the left figure, are as follows: an API managed by AWS API Gateway connects to a model endpoint, created with a single line of code in, say, a Python SDK; the endpoint connects to a load balancer, which distributes the queries to the model hosted in containers on EC2 instances, possibly spread over several Availability Zones and part of an auto-scaling group. Note that when a SageMaker model is being trained it is housed in a container, and then the same container, but now with parameters set post-training, is used in the deployment. Also note that endpoints are flexible, in the sense that it takes relatively little effort to have more than one model behind an endpoint (using a shared serving container), with A/B testing supported. The multi-model deployment on a single endpoint, rather than several endpoints, is a good answer to a question that emphasizes low deployment cost, as it reduces deployment overhead since SageMaker manages loading models in memory and scaling them based on the traffic patterns (see here and here).
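Once an endpoint exists, a client can query it for a real-time inference. The sketch below wraps the `invoke_endpoint` call of the boto3 `sagemaker-runtime` client; the helper name `predict_csv`, the endpoint name, and the choice of a CSV payload are assumptions, and the format of the reply depends entirely on the deployed model.

```python
def predict_csv(client, endpoint_name, features):
    """Send one row of features to a SageMaker endpoint and return the raw reply.

    `client` is expected to behave like boto3.client("sagemaker-runtime").
    """
    body = ",".join(str(v) for v in features)  # one CSV row, no header
    response = client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="text/csv",
        Body=body,
    )
    # The response body is a stream; read it fully and decode it as text
    return response["Body"].read().decode("utf-8")


# Hypothetical usage, requiring AWS credentials and a deployed endpoint:
#   import boto3
#   client = boto3.client("sagemaker-runtime")
#   print(predict_csv(client, "my-endpoint", [5.1, 3.5, 1.4, 0.2]))
```

Passing the client in as a parameter keeps the function easy to exercise without an AWS account, since any object with a compatible `invoke_endpoint` method will do.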

The above endpoint would work on sporadic queries, but what if batch or streaming predictions are required? For batch predictions, raw data may be put in an S3 bucket, transformed by an ETL process (EMR + Apache Spark, or Glue), and, using a batch transform, ingested by the model (see here; note that one of the advantages of batch transform is that you can feed batch data to a model without deploying a persistent endpoint). For streaming predictions, data may be ingested by Kinesis, which connects with SageMaker; see this blog post. Also see this blog post on Kinesis ingestion of video streams.

Exam Resources

- AWS official Machine Learning certification site

- AWS Ramp-Up Guide: Machine Learning

- AWS Certified Machine Learning – Specialty (MLS-C01) Exam Guide

- Exam Prep for AWS Certified Machine Learning Specialty

- GitHub repository with AWS SageMaker examples

- Great sequence of AWS YouTube videos on SageMaker

- The Elements of Data Science on AWS Training and Certification is very good

- Rules of Machine Learning: Best Practices for ML Engineering

Tips for educators to master virtual instruction | AWS Public Sector Blog

As educators, we need to approach the transition to online teaching as permanent change and innovate for the future. At California State University, we have moved to virtual instruction repeatedly throughout the last five years for a variety of reasons. I encourage educators to have an online version for all your classes, not only for emergencies, but also to be responsive to students who want online offerings.

In this AWS Public Sector Blog post I discuss how to:

- Leverage technology to replace face-to-face interaction.

- Make the tech work for you.

- Get creative.

- Throw out the rulebook.

- Change the way you approach grading.

- Balance organization with passion.

- Bonus tip for computer science instructors: Some material is easier to teach online.

From: https://aws.amazon.com/blogs/publicsector/tips-for-educators-to-master-virtual-instruction/